Thanks. I think I should describe the use cases better:

(1) for example, I use an overdrive pedal and want to turn it on and off using my LPD8. The pads on the LPD8 send velocity-sensitive CC values, while midilearn expects a CC value ≥ 64 to switch on. This forces me to press the pad hard to generate an ON event (the OFF event is no problem, since the LPD8 sends 0), so I need a way to map CC values of 1 and above to 127 and use the result to control the pedal switch;
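
To make use case (1) concrete, here is a minimal Python sketch of the mapping, assuming messages arrive as raw 3-byte MIDI tuples `(status, controller, value)`. The function name `pad_to_switch` is hypothetical; it is not part of MOD or mididings.

```python
from __future__ import annotations

# Minimal sketch: turn any non-zero CC value from a velocity-sensitive pad into
# 127, so a midilearn-assigned switch (on at value >= 64) turns on with a light tap.
# Assumption: messages are raw 3-byte MIDI tuples (status, controller, value).

CONTROL_CHANGE = 0xB0  # upper nibble of a Control Change status byte


def pad_to_switch(msg: tuple[int, int, int]) -> tuple[int, int, int]:
    """Map CC values 1..127 to 127, keep 0 as 0, pass other messages through."""
    status, controller, value = msg
    if status & 0xF0 != CONTROL_CHANGE:
        return msg  # not a CC (e.g. a note), leave it alone
    return (status, controller, 127 if value >= 1 else 0)


if __name__ == "__main__":
    print(pad_to_switch((0xB0, 1, 23)))  # soft tap -> (176, 1, 127)
    print(pad_to_switch((0xB0, 1, 0)))   # release  -> (176, 1, 0)
```

In practice you would probably restrict this to the CC numbers the pads actually send, so the LPD8 knobs keep their full range.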

(2) for example, I want to be able to change the same “drive” value from both the LPD8 and the FCB1010 foot controller.

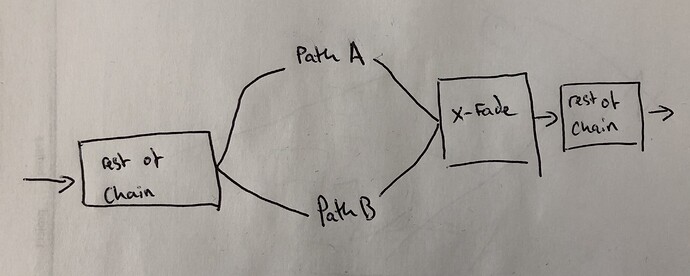
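
One way to get use case (2) without multiple midilearn assignments is to rewrite one controller's messages so they are identical to the other's, so a single learned binding responds to both. A sketch, assuming raw 3-byte MIDI tuples; the channel and CC numbers in `REMAP` are hypothetical placeholders, and channels are 0-based (MIDI channel 1 == 0).

```python
from __future__ import annotations

# Sketch: rewrite the FCB1010's messages so they look exactly like the LPD8's,
# letting one midilearn assignment on "drive" respond to both controllers.
# The channel/CC numbers below are hypothetical placeholders.

CONTROL_CHANGE = 0xB0

# (source channel, source CC) -> (target channel, target CC)
REMAP: dict[tuple[int, int], tuple[int, int]] = {
    (1, 7): (0, 1),  # hypothetical FCB1010 expression pedal -> hypothetical LPD8 knob
}


def unify(msg: tuple[int, int, int]) -> tuple[int, int, int]:
    """Rewrite channel and CC number of mapped controls; keep the value as-is."""
    status, controller, value = msg
    if status & 0xF0 != CONTROL_CHANGE:
        return msg
    target = REMAP.get((status & 0x0F, controller))
    if target is None:
        return msg  # already the learned control, or an unrelated one
    channel, new_controller = target
    return (CONTROL_CHANGE | channel, new_controller, value)
```

Because only the channel and CC number change, both controllers keep moving the same “drive” value; whichever moved last wins.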

(3) for example, I want to be able to change the “drive” value from 0 to MAX while simultaneously adjusting the Tinygain after it from 60% down to 40% (i.e. one controller controlling two or more potentiometers, each with its own scale and direction).
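
The arithmetic behind use case (3) is a linear map per target, where swapping the output bounds reverses the direction. A sketch, assuming “drive” is normalised to 0.0..1.0 (the real plugin range may differ) and Tinygain is expressed as a fraction; `scale` and `fan_out` are hypothetical names.

```python
from __future__ import annotations

# Sketch of use case (3): one incoming CC value drives two parameters with
# different ranges and directions. Assumptions: "drive" is normalised to
# 0.0..1.0 and Tinygain is a fraction (0.60 == 60%).


def scale(value: int, out_min: float, out_max: float, in_max: int = 127) -> float:
    """Linearly map value in 0..in_max onto out_min..out_max.

    out_min may be larger than out_max, which reverses the direction.
    """
    return out_min + (out_max - out_min) * value / in_max


def fan_out(cc_value: int) -> dict[str, float]:
    """Compute both parameter targets from a single CC value."""
    return {
        "drive": scale(cc_value, 0.0, 1.0),       # 0 -> MAX
        "tinygain": scale(cc_value, 0.60, 0.40),  # 60% down to 40%
    }


if __name__ == "__main__":
    for v in (0, 64, 127):
        print(v, fan_out(v))  # drive rises while tinygain falls
```

Adding a third target is just another entry in the dictionary with its own bounds.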

So far, none of this is possible using midilearn alone (at least not in 1.8, which is what my test MODEP setup uses).

On a real MOD device running 1.9 or later, it looks like I could cover some (or all?) of these use cases with CV: send the CC to MIDI-to-CV plugins, manipulate the CV signal as needed, and then have the drive and Tinygain parameters use the named CV outputs as their controllers.

Is this so? How much CPU would I consume by going this way? The original MIDI events might be pretty rare, but I understand the CV plugins themselves would produce a CV signal and run continuously. It also means I would need a way to replicate the MIDI-to-CV setup easily from pedalboard to pedalboard.
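
A back-of-the-envelope way to frame the CPU question: a CV plugin is processed on every audio cycle and writes one value per sample, regardless of how often MIDI arrives. The sketch below assumes a 48 kHz sample rate, a 128-sample buffer and a 3-plugin chain; the actual MOD settings may differ, and `cv_chain_load` is a hypothetical helper.

```python
from __future__ import annotations

# Back-of-the-envelope sketch: CV plugins run every audio cycle and write one
# value per sample, whether or not any MIDI arrived.
# Assumptions: 48 kHz, 128-sample buffer, 3 plugins (e.g. MIDI-to-CV -> scaling -> output).


def cv_chain_load(sample_rate: int, buffer_size: int, plugins: int) -> tuple[float, int]:
    """Return (audio cycles per second, CV samples written per second)."""
    cycles_per_second = sample_rate / buffer_size
    samples_per_second = sample_rate * plugins
    return cycles_per_second, samples_per_second


if __name__ == "__main__":
    print(cv_chain_load(48_000, 128, 3))  # (375.0, 144000)
```

This does not measure CPU load; it only shows that the work scales with the sample rate and the number of CV plugins rather than with how often a pad is pressed. Only a measurement on the device would give the real figure.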

It would therefore be nice to be able (if this wasn’t already added in 1.9; I couldn’t find details on whether it’s there) to name MIDI plugin output ports, the way we can name CV output ports, and, when assigning controls, to tell the UI to listen only to that MIDI plugin output port.

In the meantime, when I have the time (probably over Christmas), I’ll try a different approach altogether and use the “mididings” tool, which creates input and output ports between which I can run arbitrary Python scripts with MIDI event manipulation rules. It should let me cover all of the use cases.
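
The “rules between an input and an output port” idea can be sketched in plain Python as a chain of functions, where each rule may drop, pass or duplicate a message. This is NOT the mididings API; it only mimics the concept, and `chain`, `only_cc`, `split` and the CC numbers are hypothetical.

```python
from typing import Callable, List, Tuple

# Sketch of chaining MIDI rules in plain Python (not the mididings API).
# Each rule takes one message and returns zero, one or several messages.

Msg = Tuple[int, int, int]  # raw (status, data1, data2)
Rule = Callable[[Msg], List[Msg]]


def chain(*rules: Rule) -> Rule:
    """Apply rules in order, feeding every output of one rule into the next."""
    def run(msg: Msg) -> List[Msg]:
        batch = [msg]
        for rule in rules:
            batch = [out for m in batch for out in rule(m)]
        return batch
    return run


def only_cc(msg: Msg) -> List[Msg]:
    """Drop everything that is not a Control Change."""
    return [msg] if msg[0] & 0xF0 == 0xB0 else []


def split(cc_in: int, cc_a: int, cc_b: int) -> Rule:
    """Copy one CC to two CCs, the second with the value inverted."""
    def rule(msg: Msg) -> List[Msg]:
        status, cc, value = msg
        if cc != cc_in:
            return [msg]
        return [(status, cc_a, value), (status, cc_b, 127 - value)]
    return rule


patch = chain(only_cc, split(1, 20, 21))

if __name__ == "__main__":
    print(patch((0xB0, 1, 100)))   # [(176, 20, 100), (176, 21, 27)]
    print(patch((0x90, 60, 100)))  # [] (note dropped)
```

Keeping each rule small and composing them is what makes it easy to cover several use cases in one script.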

It might be a bit cumbersome to create the rules first, and also to make sure the MOD UI’s midilearn picks up the right event (i.e. the one from the mididings output rather than the one from the source controller), but that should be doable by having mididings generate the CC event from a different event type, for example a NoteOn, so the source controller itself never sends that CC.
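
The NoteOn-to-CC trick can be sketched as follows, assuming raw 3-byte MIDI tuples. The note-to-CC mapping is hypothetical, and the result is momentary (on while the pad is held) rather than a toggle.

```python
from __future__ import annotations

# Sketch: have the pad send notes, and convert NoteOn/NoteOff into a CC that
# the source controller never sends itself, so midilearn can only latch onto
# the converted event. The note -> CC mapping is hypothetical.

NOTE_OFF, NOTE_ON, CONTROL_CHANGE = 0x80, 0x90, 0xB0


def note_to_cc(msg: tuple[int, int, int], note_map: dict[int, int]) -> tuple[int, int, int]:
    """Convert mapped notes to CC 127 (on) / 0 (off) on the same channel."""
    status, note, velocity = msg
    kind, channel = status & 0xF0, status & 0x0F
    if kind not in (NOTE_ON, NOTE_OFF) or note not in note_map:
        return msg
    # A NoteOn with velocity 0 is conventionally treated as a NoteOff.
    is_on = kind == NOTE_ON and velocity > 0
    return (CONTROL_CHANGE | channel, note_map[note], 127 if is_on else 0)


if __name__ == "__main__":
    pads = {36: 20}  # hypothetical: pad note 36 -> CC 20
    print(note_to_cc((0x90, 36, 100), pads))  # (176, 20, 127)
    print(note_to_cc((0x80, 36, 0), pads))    # (176, 20, 0)
```

This also solves use case (1) as a side effect, because any velocity above 0 becomes a full 127.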

Then, of course, the big question will be how to integrate this with the MOD Dwarf when it arrives (e.g. would I be able to run mididings on the Dwarf, or would I need a more complicated MIDI routing setup that sends all my MIDI events to the Raspberry Pi and back to the Dwarf once they are modified?), but that will have to wait until the MOD Dwarf arrives.

Some of it may have to wait until the Dwarf arrives (and it looks like v1.10 is going to bring a lot of nice new features, which is quite interesting!)